Will Generative AI Drastically Change the World?

- The Evolution Required for AI Services and the Capacitors Supporting Them - (First Part)

2024/1/24

With the rapid development and spread of generative AI services, the computational load on the hardware supporting their operation is also increasing dramatically.

Why is the computational load increasing, and how is hardware evolving to meet these demands? This article introduces the background to the development of generative AI, examples of the hardware supporting it, and the capacitors that contribute significantly to computational processing performance.

Development of Generative AI

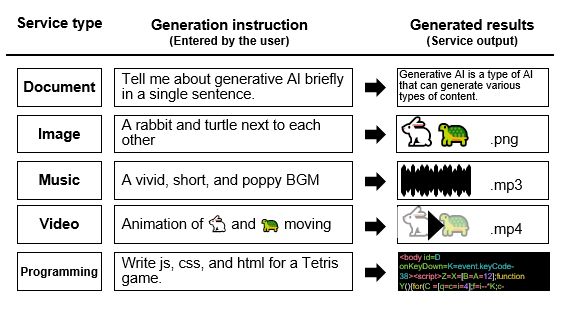

In recent years, the term "generative AI" has appeared in the news more and more often. Generative AI refers to AI that generates new content based on data. For example, whereas AI was previously used mainly to identify images, generative AI generates new images based on the input it is given. A typical example of generative AI is "ChatGPT," which has become a hot topic for its ability to return sophisticated responses to a wide range of questions. ChatGPT was released to the public in November 2022, and in January of the following year it became the fastest web service in the world to exceed 100 million registered users, attracting a great deal of attention worldwide.

ChatGPT has sparked the launch of a number of services using generative AI technology. These services are not limited to answering questions; they also include document creation and summarization, drafting of projects, designing and creating music, program code generation, and more. While the broad usefulness of generative AI is expected to enrich our lives, it has also generated a number of controversies, including copyright issues and fears that it will eliminate people's jobs. It is also said that the use of generative AI will expand into physical fields that handle real objects (e.g., robots and heavy industry), and this trend continues to attract attention.

| | Conventional AI (Predictive AI) | Generative AI |
|---|---|---|
| Function | Identify, analyze, and predict indicated targets based on data | Generate new content based on data as directed |
| Effect | Identify, analyze, and predict with greater accuracy than humans | Create new content in less time than a human would |
| Application | Identification of documents, images, videos, etc., and prediction through data analysis | Generation of documents, images, videos, etc. |

Mechanism of Generative AI

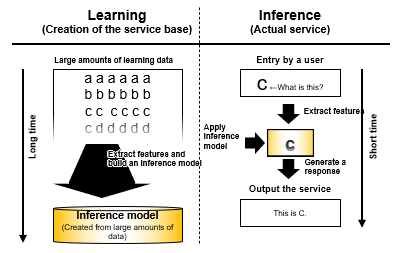

How does generative AI generate new content? Broadly, this is accomplished through two processes: (1) learning and (2) inference (Figure 2).

(1) Learning

This is the process of building the model that performs the generation. Calculations are run over a vast amount of data to capture its features, and the result is an inference model capable of advanced generation. As a trade-off, the broader the coverage and the higher the accuracy a service aims for, the longer learning takes (sometimes several months). To launch and update services quickly, learning time must be reduced.
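
As a toy illustration of what "capturing features of the data" can mean, the sketch below learns a very simple word-pair (bigram) model by counting which word follows which in a tiny corpus. This is an assumption-laden simplification: real generative AI services use large neural networks rather than counting, and the corpus and the function name `learn_bigram_model` are hypothetical.

```python
from collections import Counter, defaultdict


def learn_bigram_model(corpus):
    """Learn, for each word, the probability of each word that follows it."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        # Pair each word with its successor and count the pair
        for current_word, next_word in zip(words, words[1:]):
            counts[current_word][next_word] += 1

    # Convert raw counts into probabilities per preceding word
    model = {}
    for word, followers in counts.items():
        total = sum(followers.values())
        model[word] = {w: c / total for w, c in followers.items()}
    return model


# Hypothetical training data, chosen only for illustration
corpus = [
    "capacitors support the processor",
    "capacitors support the power supply",
    "servers use the processor",
]
model = learn_bigram_model(corpus)
print(model["the"])
```

Even in this toy form, the cost of learning grows with the amount of data, because every sentence has to be processed; this is the same trade-off, at a vastly smaller scale, that makes learning time a concern for real services.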

(2) Inference

This is the process of executing the generation (the actual service). Responses to user input are generated using the inference model obtained through learning. The content generated is the content judged to have the highest probability of matching the input. Inference takes far less time than learning (a few tens of seconds at most). Because users avoid services with long response times, inference must run as fast as possible, where even a one-second improvement matters.
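
The idea of selecting the content with the highest probability can be sketched as follows. This is a minimal sketch, not how any particular service is implemented: the candidate words and their scores are made up, and the function names are hypothetical. It converts raw model scores into probabilities with a softmax and then picks the most probable candidate. Real services often sample from the probabilities rather than always taking the top candidate.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtracting the highest score keeps exp() numerically stable
    exps = {k: math.exp(v - highest) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}


def pick_most_probable(scores):
    """Return the candidate with the highest probability and that probability."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]


# Hypothetical scores a model might assign to candidate next words
candidate_scores = {"capacitor": 2.0, "resistor": 1.0, "banana": -1.0}
token, prob = pick_most_probable(candidate_scores)
print(token, round(prob, 3))
```

Because the choice rests only on probability, the top candidate is the most plausible continuation, not necessarily a correct one.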

Issues with Generative AI

While generative AI is a highly promising technology due to its wide range of applications and convenience, some practical issues remain. As mentioned above, response content is generated solely on the basis of probability, so inaccurate content is sometimes generated. This phenomenon is called "hallucination" because the AI outputs plausible falsehoods as if it were hallucinating. What measures are effective against this issue? One method recognized as effective is to increase the amount of data and the number of parameters used in the learning process. It has been publicly reported that the accuracy of the generative AI model used in OpenAI's ChatGPT has improved through this approach as the model has evolved.

| Year | 2018 | 2019 | 2020 | 2022 | 2023 | 202X |
|---|---|---|---|---|---|---|
| Version | 1 | 2 | 3 | 3.5 | 4 | 5 |
| No. of parameters | 117 million | 1.5 billion | 175 billion | 355 billion | >1.5 trillion*1 | >15 trillion*1 |
| Learning data | 4.5 GB | 40 GB | 570 GB | ? TB | >5 TB*1 | >50 TB*1 |
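
To give a feel for the scale in the table, the sketch below computes how much the parameter count grew between versions and roughly how much memory the parameters alone would occupy. The parameter counts are taken from the table; the figure of 2 bytes per parameter (16-bit values) is an assumption made here for illustration and is not stated in the article. Versions 4 and 5 are omitted because the table gives only lower bounds for them.

```python
# Parameter counts from the table above
PARAMETERS = {
    "1": 117e6,
    "2": 1.5e9,
    "3": 175e9,
    "3.5": 355e9,
}

# Assumption for illustration: each parameter is stored as a 16-bit value
BYTES_PER_PARAMETER = 2


def weight_gigabytes(num_parameters):
    """Approximate memory needed just to hold the parameters, in GB."""
    return num_parameters * BYTES_PER_PARAMETER / 1e9


versions = list(PARAMETERS)
for previous, current in zip(versions, versions[1:]):
    growth = PARAMETERS[current] / PARAMETERS[previous]
    print(f"ver. {previous} -> ver. {current}: about {growth:.1f}x more parameters")

for version, count in PARAMETERS.items():
    print(f"ver. {version}: about {weight_gigabytes(count):,.1f} GB of parameters")
```

Under this assumption, the parameters of version 3 alone come to about 350 GB, which suggests why models of this size call for larger-scale, higher-performance hardware.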

Hardware Supporting Generative AI

Currently, ChatGPT and many other generative AI services are provided as web services. Computer processing for executing web services is performed on a large number of servers installed in so-called cloud data centers.

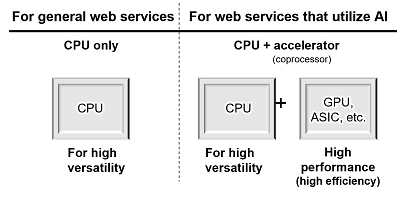

In conventional web services, a single server was usually equipped with one or two CPUs*1 responsible for the main computational processes, and most processing for a service was performed by the CPUs alone. During the 2010s, however, circuit miniaturization, which had been a main driver of CPU performance gains, became less effective, and improving performance efficiently became difficult. In response, the approach of using accelerators (coprocessors) emerged. The idea is to add a different type of high-efficiency processor to a server in order to improve processing performance efficiently. This approach made it practical to apply AI, which requires a huge amount of data processing, to web services. As an accelerator for generative AI, a GPU*2 or an ASIC*3 is generally selected (see Note).

Note: How processors are used in generative AI

*1 CPU (Central Processing Unit)

The CPU is essential in a server because it handles the fundamentals of web services, including general-purpose software processing. For specific processes, however, processors with higher parallel processing performance, such as GPUs and ASICs, can process data more efficiently than a CPU. For this reason, combining these processors with CPUs has become commonplace in AI.

*2 GPU (Graphics Processing Unit)

As its name suggests, the GPU was conventionally used mainly for image processing, for example in games. However, because learning and inference use similar parallel processing, GPUs have been widely used for AI data processing since the mid-2010s. The software flexibility of GPUs is also attractive for keeping up with constantly evolving AI algorithms, and GPUs have been well received by many AI researchers and AI service engineers. GPUs therefore serve as a foundation for the evolution and development of generative AI.

*3 ASIC (Application Specific Integrated Circuit)

Because these processors are designed for specific applications, they can provide high performance while consuming less power, which reduces operating costs. However, they are not as flexible as GPUs and their processing is fixed to a certain degree, so they are developed only after the target processes and the scale of development have been examined thoroughly. Because this development requires a lot of capital and labor, ASICs are used mainly by major cloud vendors with strong financial and development capabilities. That said, some processor manufacturers encourage user companies to adopt ASICs by providing extensive technical support.

Market and Equipment Trends

For the learning and inference processing that improves AI performance, demand for higher hardware data processing performance keeps growing. This is because both higher-accuracy services and shorter lead times for providing them are important to companies that develop services using generative AI.

| Service element | Expectation of service companies | Hardware task |
|---|---|---|
| ❶ Accuracy | Want to get more users through higher-accuracy AI | Larger-scale data processing is required. → Higher-performance hardware is required. |
| ❷ Time | Want to launch and update services quickly. Want to reduce response times. | Learning and inference time must be reduced. → Higher-performance hardware is required. |

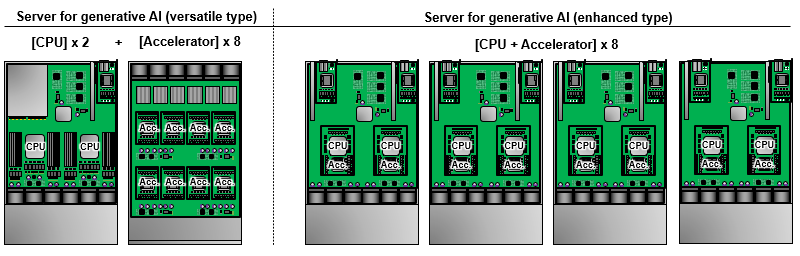

For this reason, processors for generative AI have extremely high-end specifications, and the number of installed units is increasing. A typical configuration combines two CPUs with eight accelerators. Newer hardware pursues even higher performance: the number of CPUs is increased to eight, and CPUs and accelerators are mounted close together or integrated within the same processor package to improve both the communication speed between them and power efficiency.

There are other approaches to improvement as well. Examples include the use of mega ASICs to speed up learning, and the implementation of AI processing functions in the processors of mobile and embedded devices on the terminal side to speed up inference. New cases like these are reported constantly. In any case, the key for generative AI hardware is improving processor performance with cutting-edge technology and finding the optimum combination across the overall system. As generative AI develops rapidly, reducing environmental burden and cost has become an extremely important issue for hardware as well as software. Not only major companies but also many up-and-coming manufacturers have entered this field.

The sections above introduced configuration examples, focusing on the processors that play a central role in the hardware supporting generative AI. To operate such high-performance processors stably, the electronic components used with them must also meet demanding requirements. The second part will introduce the performance required of capacitors, which contribute significantly to the power supply of processors, together with specific solution examples from Panasonic Industry.


Related information

- Is it essential to a data center? The reasons why a 48-V power supply is required and the challenges of power supply design (1) -Why is a 48-V power supply required?-

- Is it essential to a data center? The reasons why a 48V power supply is required and the challenges of power supply design (2) -Selection of high-performance capacitors-

- Solving Problems with MLCCs by Adopting Conductive Polymer Capacitors

- Case Examples of Replacing Switching Power Supply Input/Output Capacitors from MLCCs to Hybrid Capacitors